FEATURE – This student’s research confirms that lean efforts stall when culture lags. Case evidence shows fear, poor communication and top-down tools breed resistance, but participatory leadership fosters resilient lasting outcomes.

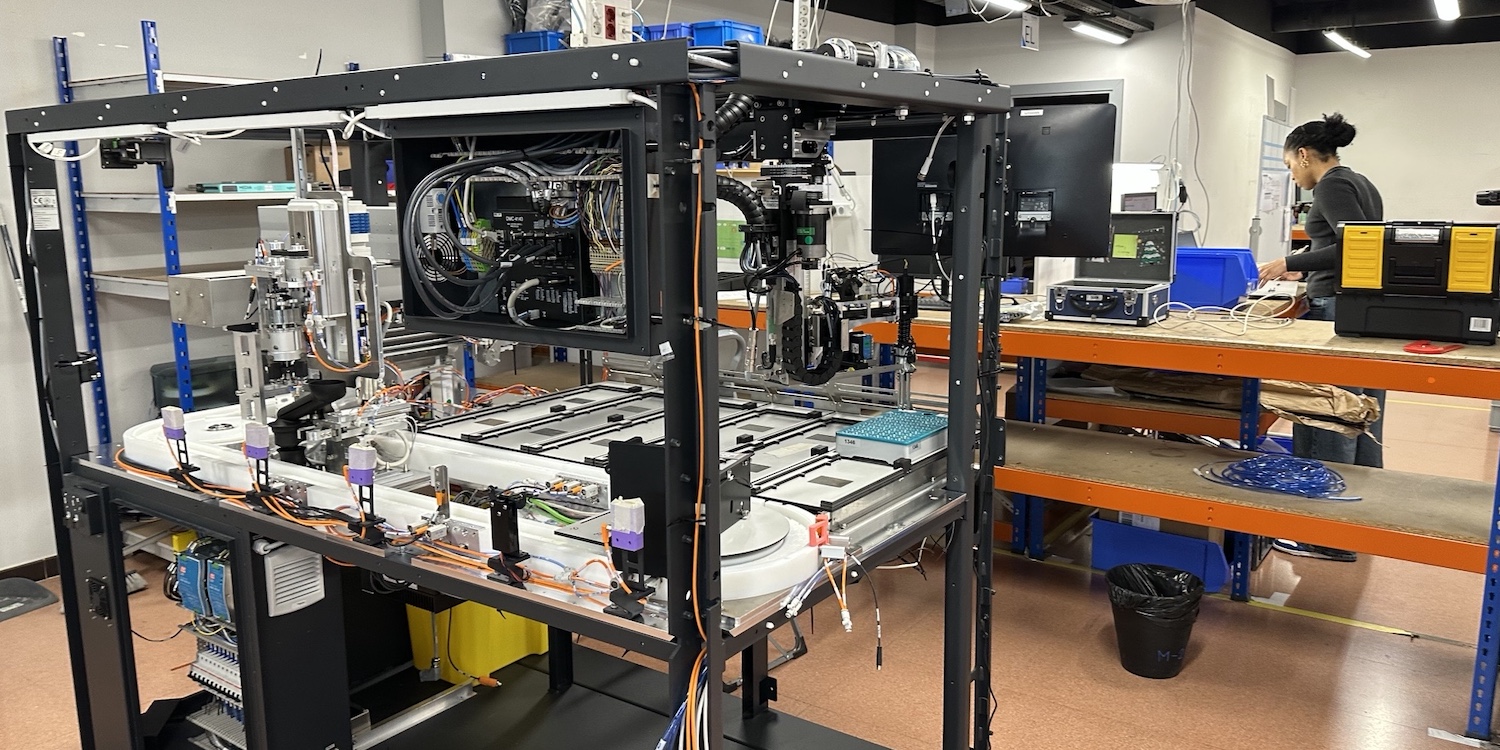

INTERVIEW - Our editor sits down with the General Manager of Sensata Technologies to learn how they have been blending culture, leadership, and digital tech to drive agility in global sensor manufacturing.

CASE STUDY – Lean allowed NGNY to evolve from creative improvisation to structured performance by redesigning operations and standardizing processes – achieving reliable deliveries, built-in quality, and a cultural transformation.

CASE STUDY – Italian company El.Co. leveraged digitalization to overcome some key limitations of its manual kanban system while maintaining its pull system intact.

FEATURE – Thanks to Lean, EHA Clinics improved safety, efficiency, and patient experience. Now they’re hoping their results will inspire a growing movement to transform healthcare across Nigeria.

CASE STUDY – Facing costly inventory errors, Hidrogística transformed its warehouse operations by engaging its team, standardizing processes, and building a culture of ownership – reducing discrepancies from 5% to near zero.

FEATURE – The author shares his thoughts about the thinking that informs Uri Levine’s book and explains why this is perfectly align with a lean mindset.

FEATURE – Our editor attends a Lean Day at Dreamplace Hotels & Resorts in Tenerife. In this report, he shares his thoughts about team building, following up on improvements, and yokoten.

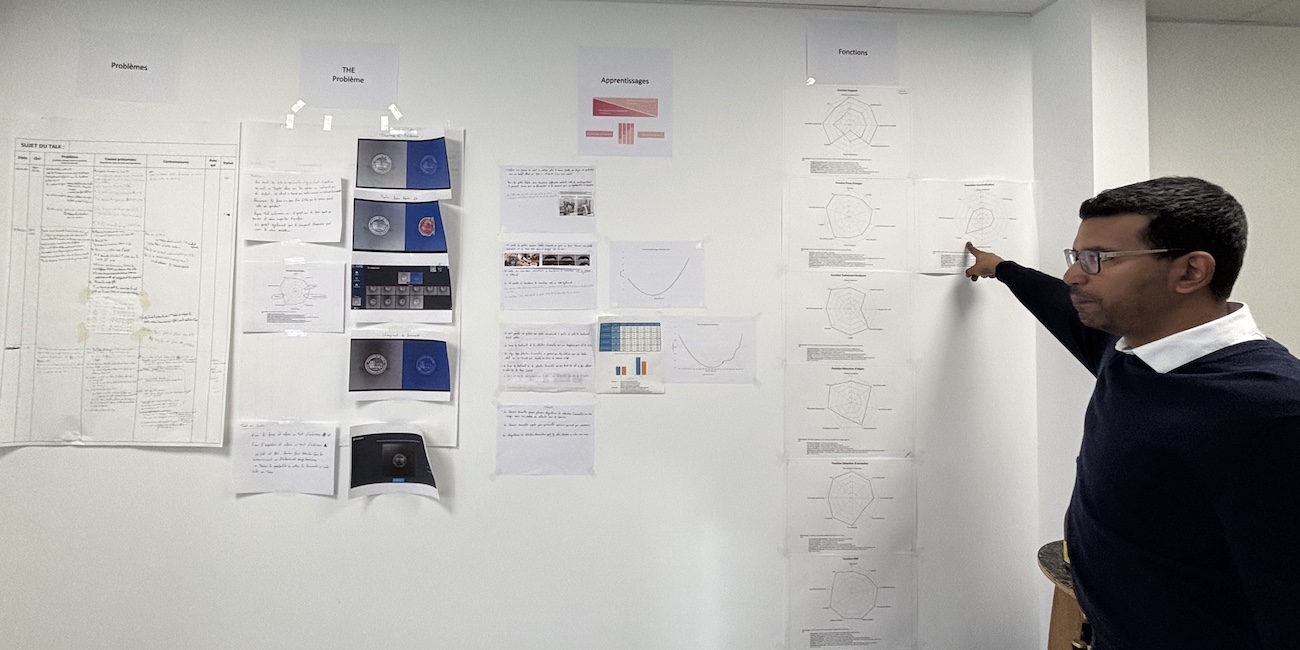

CASE STUDY – This French firm transformed its engineering approach through continuous learning, radical redesign, and user-driven innovation, evolving into a lean, resilient company with a strong culture of development.

FEATURE – The author explores how SKC embraced Lean Thinking as a cultural shift – one that empowers people, transforms leadership, and shows how true sustainability starts with human growth.

NOTES FROM THE GEMBA – Somfy’s R&D department is applying Lean Thinking to close knowledge gaps, improve collaboration, and boost innovation and technical competence.

FEATURE – Learn how Lean Thinking and the establishment of Communities of Practice are transforming automotive reconditioning at Aramis Group.

FEATURE – To accelerate learning and change, don’t copy blindly—observe, adapt, and apply with purpose. That’s how yokoten drives real transformation, argues the author.

FEATURE – Embracing lean is like painting: tools are colors, respect for people is the brush, and true change blends both with intention.

FEATURE – Lean Thinking remains vital—but must now adapt to disruption with resilience, flexibility, and human-centric systems, says the author.

NOTES FROM THE GEMBA – What’s kaizen for? Improving quality? Generating a flow of ideas? Challenging a method? All of the above, so long as the underlying goal is to make people’s lives easier.

INTERVIEW – A new book on hoshin kanri is out! We sit down with the author to learn about the publication and how it can help organizations.

INTERVIEW – Learn how Lean Thinking helped GE Appliances improve performance, develop people, and build a resilient, customer-focused, and learning-driven organization.

FEATURE – Many lean initiatives fail because managers lack an understanding of how adults learn. The authors discuss why execs need clear strategies that link improvement efforts to human capital development.

FEATURE – This article explores the benefits of an electronic kanban and explains how it enables a truly lean supply chain.

INTERVIEW - On April 18th, Lean Global Network and KD Consulting will hold a not-to-be-missed event in Ho Chi Minh City for the Vietnamese lean community. In this Q&A, Tony and Khoa tell us what to expect from the summit.

CASE STUDY – This nickel smelting operation in Indonesia used the Lean Transformation Framework to optimize furnace inspections and minimize delays.

CASE STUDY – Learn how Knauf, a global provider of prefabricated materials, regained its sales leadership in Brazil, impressively optimized its performance indicators, and transformed people's mindset and behaviors.

FEATURE – Focused experimentation and kaizen can lead to impressive results in as little as one day, as the story of this Hungarian company shows.

INTERVIEW – Dave Brunt tells us about the upcoming UK Lean Summit, one of the year’s must-attend events for lean practitioners.

CASE STUDY – Lean Thinking helped Bernard Controls Europe improve efficiency, reduce lead times, and enhance quality, fostering resilience in the post-pandemic industrial landscape.

CASE STUDY – A Scaling Kaizen initiative at Veolia Water Information Systems engaged 45 teams in Lean IT practices, improving delivery, incident reduction, and fostering talent.

CASE STUDY – The story of improvement at China-based asset management company Hengbaishi is a great reminder of the universality of lean principles.

OPINION – DeepSeek’s AI innovations can be compared to the disruption Toyota brought to automotive, showcasing efficiency, problem-solving, and value-driven adaptability over resource-intensive methods.

FEATURE – Collaboration with suppliers, rather than a merely transactional relationship, has to power to transform a company’s operations, fostering innovation and building resilience.

FEATURE – Attracting, developing and retaining talent has become a pressing issue in the corporate world, as new generations show skepticism towards traditional management. Lean is the answer, say the authors.

FEATURE – To boost the “adoptability” of Lean and sustain it over time, it’s necessary to develop institutions that support our improvement efforts without making processes more bureaucratic.

FEATURE - With heavy hearts, we share the news of the passing of Peter Walsh, a pioneer of the Lean Community. Peter's work was instrumental in allowing Lean Thinking to take root in Australia, as some of those who knew him tell us here.

FEATURE – This article explores the integration of lean leadership behaviors with digital tools to achieve sustainable performance improvement in modern organizations.

FEATURE – In this new series, the author taps into LEI Hungary’s experience with lean transformations to highlight the most common mistakes people make when approaching the A3 framework.

INTERVIEW – “Dr Fred” is an amazing leader, who led one of the most successful lean healthcare transformations this magazine has come across. In this Q&A, he talks to Planet Lean about his new book, which is result of over 15 years of lean learning.

CASE STUDY – The authors discuss how rooftop window producer VELUX harnessed Lean Thinking and Industry 4.0 to empower shop floor workers, after rediscovering the kaizen spirit following a digitalization initiative that didn’t work out.

FEATURE – In this article, Michael discusses the four levers that organizations can work on to thrive in good times… and bad times.

CASE STUDY – UK law firm Weightmans has transformed its new legal case intake process using the Lean Transformation Framework, revolutionizing both operations and people development.

FEATURE – Ahead of his presentation at next week’s Lean Global Connection, Jim Womack explains why Lean is made for a world in turmoil but urges us to strike a balance between immediate response and thorough understanding of the problem.

FEATURE – What is psychological safety? What is it not? This article explores its advantages and foundational elements, and how organizations need to transform their leadership to achieve this evolution in management.

CASE STUDY – Thanks to a commitment to Lean Thinking and a solid coaching system, this hospital in China has been able to initiate a remarkable transformation.

INTERVIEW – As their book on daily management is released in the US in English, we talk to the authors about the thinking behind the book and the lessons learned since its launch in Brazil three years ago.

INTERVIEW – Ahead of the Lean Global Connection 2024, we hear from Kruk S.A. in Poland to learn how they leveraged “centres of excellence” to break down company silos.

BOOK EXCERPT – These are the opening paragraphs of Chapter 1 of the new Lean Global Network book about applying Lean Thinking in a resort hotel.

FEATURE - Projects or programs? The authors discuss why Lean Thinking should go beyond piecemeal operational improvements, as a transformative strategy that balances today’s demands with future adaptiveness.

FEATURE – Our editor learns about the lean work taking place in two successful omelette-based restaurants in the Netherlands.

FEATURE – We see the shortcomings of strategies based solely on financial metrics every single day. The author discusses how Lean Thinking offers an alternative that leads to better and more sustainable results.

FEATURE – In part 2 of his article, the author unveils an improved process that allows this Hungarian company to replace machine parts with minimal production downtime.

FEATURE - Presenting the key concepts in her latest book, the author tells us how to escape the feature frenzy trap and build products customers love.

CASE STUDY – This Brazilian agrobusiness has developed a unique and clever management system that puts people at the heart of the work.

EVENT LAUNCH - Save the date! The Lean Global Connection 2024 will take place on November 21 and 22. In this article, our editor introduces the event's theme and tells us what attendees can expect from the largest online lean event in the world.

INTERVIEW – Lean is not widespread in Colombia’s construction industry yet. The interviewee discusses the benefits this philosophy can bring to construction sites.

FEATURE – There is more to logistics than an ancillary process that supports the rest of the company. But then, why do we never have a plan for it, like we do for manufacturing?

INTERVIEW – A recently-published book discusses how Lean is the only way for a company to scale while retaining an Agile culture. We talk to one of the authors.

FEATURE - This month’s Design Brief explores the concept of craftsmanship in design and engineering, how to cultivate it in individuals and organizations, and the benefits of pursuing excellence.

FEATURE – Taiwan-based Machan International Co. manufactures sheet metal products, tool storage and medical trolleys, and smart energy storage cabinets. Last year, they turned to lean to improve their product development process.

BOOK EXCERPT – In the introduction to his new book, Art Byrne reflects on his lean journey and explains why to understand lean one must change their mindset.

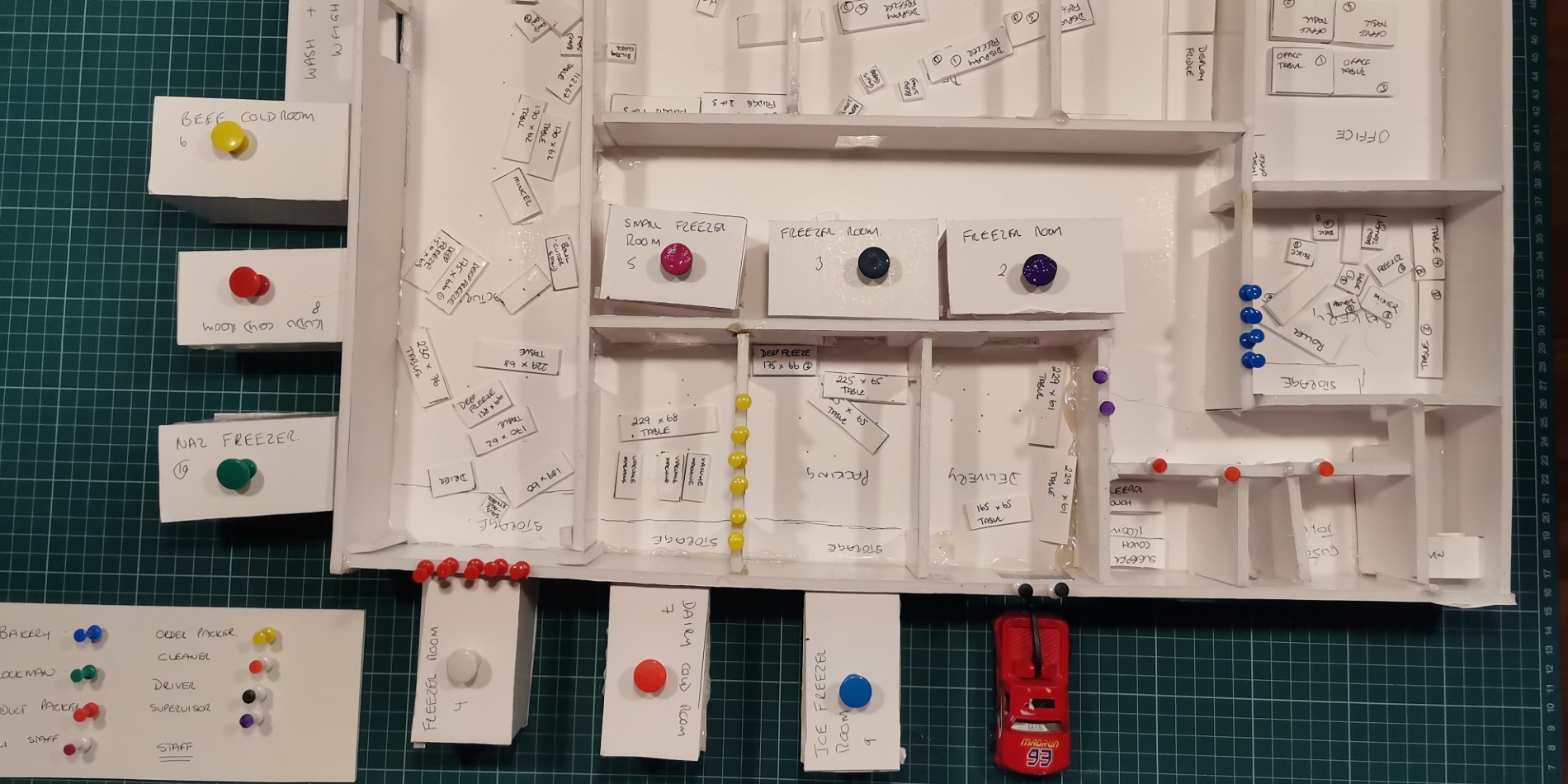

FEATURE - Looking back to her experience coaching teams in Africa, the author encourages us to introduce play in our work, as a way of teaching people and of unlocking creativity.

FEATURE – To successfully transform, we need to change our “theory of success”. The authors discuss the obstacles managers face along the way.

FEATURE - The Basic Thinking dimension of the Lean Transformation Framework is often the most neglected and the least understood. The author tries to shed a light on the subject.

FEATURE – Faced with increased production volumes, this Dutch company realized the need to improve its processes by developing people skills.

FEATURE – A situation that would be unthinkable in a sports context is an every-day occurrence in many of our organizations. Should we change our way of thinking about our machinery?

FEATURE – A bad management system generates distortions that represent the root cause of many of the problems experienced by organizations.

FEATURE – Variety in sensei approaches can cause a lot of confusion. The author sheds light on the subject by describing a sensei’s five modes of interacting with individuals and teams.

CASE STUDY – Faced with safety and quality issues, this Brazilian manufacturing plant installed a dojo, and the results are very promising.

CASE STUDY – Manuelita Sugar Mill in Colombia adopted Daily Management to bolster leadership effectiveness and operational outcomes, addressing siloed thinking and communication challenges.

FEATURE - In this article, taken from LEI's Design Brief, Jim Morgan talks about the often-overlooked topic of building quality into new products. Learn how a customer-centric approach in development can prevent costly rework and build lasting trust.

INTERVIEW - In this interview, Lynne Smith discusses the potential and difficulties of applying Lean Thinking to NGOs and looks back at the lean transformation at the Gates Foundation.

FEATURE – In February, our affiliate in the Netherlands turned 20. The President of Lean Management Instituut reflects on this achievement and looks back at these two decades.

FEATURE – To get the results we want from a system, or prevent it from generating undesirable events, we need to understand how it works and behaves in the real world. That’s exactly what Jidoka does.

FEATURE – The successful project discussed in this article shows how Lean Thinking can bring huge benefits to small and medium enterprises and paves the way to a new way of looking at lean coaching.

FEATURE – This article applauds the tenacity of women leaders and seeks to illustrate how they motivate others to take on the sustainability challenge through lean practices.

FEATURE – When it comes to the fight against climate change, we can’t expect to achieve much until we fundamentally challenge the way we think about resource consumption. Lean is our tool to do that.

FEATURE – The author highlights the critical “power skills” leaders need to provide the right support to the transformations they lead.

FEATURE - The editorial board members of Planet Lean reflect on the magazine's origin story and its role within and contribution to the Lean Community.

FEATURE – Ten years ago, Planet Lean was launched as a new platform for the Lean Community to share its experiences and knowledge. Our editor reflects on the magazine's mission, history and contribution of the spread of Lean Thinking.

INTERVIEW – The UK Lean Summit 2024 is coming up in April. We speak to Dave Brunt to hear about the event and what we can expect from the presentations.

FICTION – In the second and final part of this story, the main characters discuss in front of a board. If you regularly use visual management, their conversation will resonate with you.

INTERVIEW – We speak with avianca’s VP of Maintenance and Engineering to learn about the efforts the airline is making to fulfil its promise to passengers.

FEATURE – To develop a lean culture means to develop each lean thinker and help them reach their full potential. Here’s how Aramis Group does it.

CASE STUDY – Following a positive experience in manufacturing, this Colombian company brought lean to the rest of the organization. Its goal? To become a reliable partner for its clients in the national and international markets.

FICTION - Part 1 of this story reminds us that the role of leaders in an organization is to set the example for others, behaving the way they want the rest of the company to behave.

FEATURE – Nobody understands humans as well as humans do, and Jidoka is the key to learning about your customers and create ever-better products and services.

FEATURE – In this article, you’ll understand how potential errors can become an endless source of personal and managerial growth.

FEATURE – By adopting a product takt time perspective, we can better align engineering decisions with market demands, leading to better design, manufacturing, and material choices.

FEATURE – How does the improvement department of a company become a hindrance to the transformation? The author answers this question and discusses how this can be avoided.

FEATURE – If you think one is born a leader, think again. Leadership can be learned and, in this article, the author provides a four-step guide to developing it.

CASE STUDY – The story of Norwegian furniture manufacturer Haugstad Møbel that, thanks to Lean Thinking, was able to transform its culture and unlock the potential of sustainable growth.

WEB SERIES - In the last episode of our It's a Lean World series, we travel to the tip of South America to visit the southernmost Distribution Center in the world and learn about its lean work.

FEATURE – On October 31st, the Lean Movement lost one of his pioneers. In this piece, Dan Jones reflects on Freddy Ballé’s legacy.

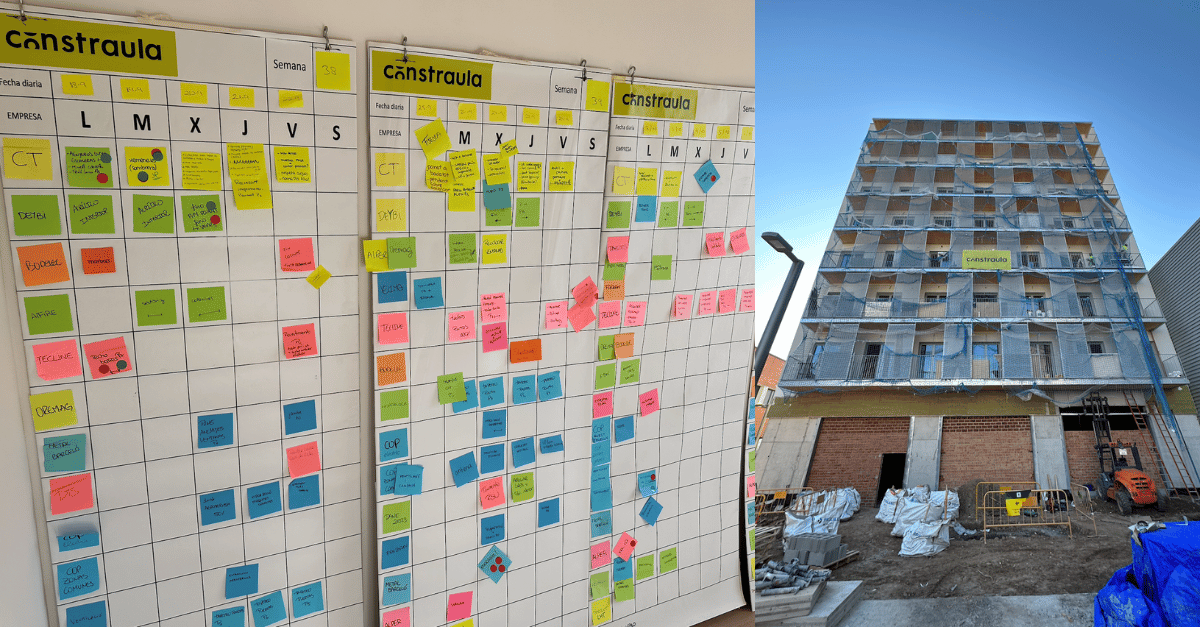

CASE STUDY – Thanks to Lean Thinking, this Spanish construction company was able to deliver a challenging project in one year, well ahead of schedule. Here’s how they did it.

EVENT - The Lean Global Connection is around the corner! Our editor takes you through some of the key highlights you can expect and reminds us of the idea behind the largest online lean event in the world.

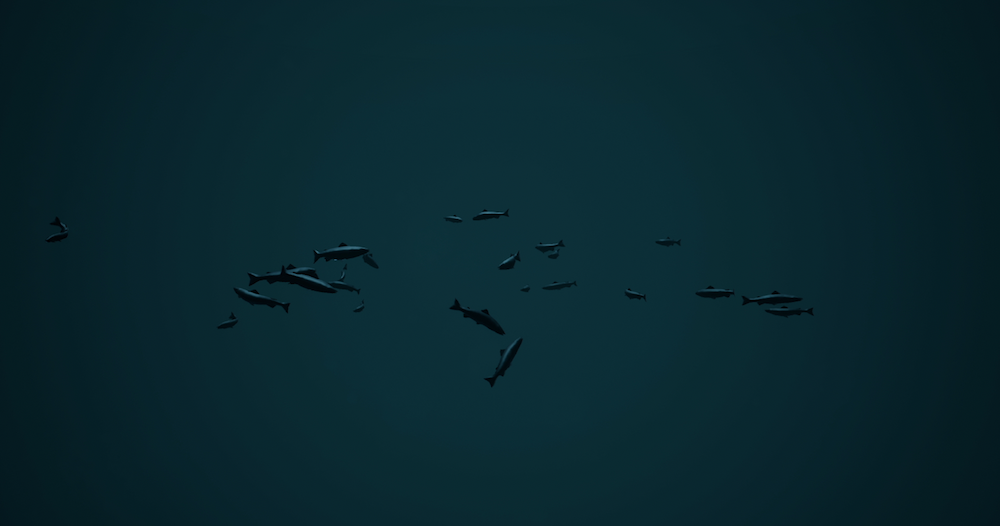

WEB SERIES - In this episode, we head to Chilean Patagonia to visit a manufacturer of fish feed that turned to Lean Thinking to improve yield and eliminate inventory gaps and stock-outs.

FEATURE – The author explores methods to expand your flow “sweet spots”, enhance focus, and achieve a state of effortless productivity.

FEATURE – This insightful piece explores the true meaning of problem solving, looking at the common mistakes leaders make when they adopt some of its key practices.

FEATURE – We are used to approaching strategic thinking as if our organization was in a position of stability and dominance. What if we started to look at it as creating better deals with all parties involved?

CASE STUDY – When it had to develop a production process for a new product from scratch, this Dutch company leveraged lean tools and practices. And the results were impressive.

FEATURE – Waste in the agricultural sector is a significant threat not only to the environment, the economy and farmers, but also to food security around the world.